Abstract

Animals don’t move by stringing together independent actions; they fluidly switch between behavioral motifs, each governed by its own dynamics. Premotor and basal ganglia circuits select which behavior to initiate and set its initial conditions, while the primary motor cortex evolves that behavior forward as a dynamical system. Yet computational approaches to modeling naturalistic behavior remain fragmented. While probabilistic state-space models (SSMs) can segment discrete behavioral syllables from keypoint data and reinforcement learning (RL) drives lower-level physics control, there exists no principled bridge between them. We introduce Code2Act, a Switching Nonlinear Dynamical System (S-nLDS) that unifies these levels within a single imitation framework. Built on observables from MIMIC-MJX (Zhang & Yang et al. 2025), a biomechanical simulation of naturalistic rodent behavior, our model replaces the linear regime dynamics of classical switching linear dynamical systems with an RNN trained in an RL environment, capable of capturing the nonlinear, non-Gaussian structure of real motor control. The result is a system that jointly segments continuous behavior into discrete semantic units and generates long, biomechanically realistic motor sequences, closing the loop between behavioral understanding and motor generation in a way that mirrors the hierarchical dynamical organization of biological motor control.

Motivation

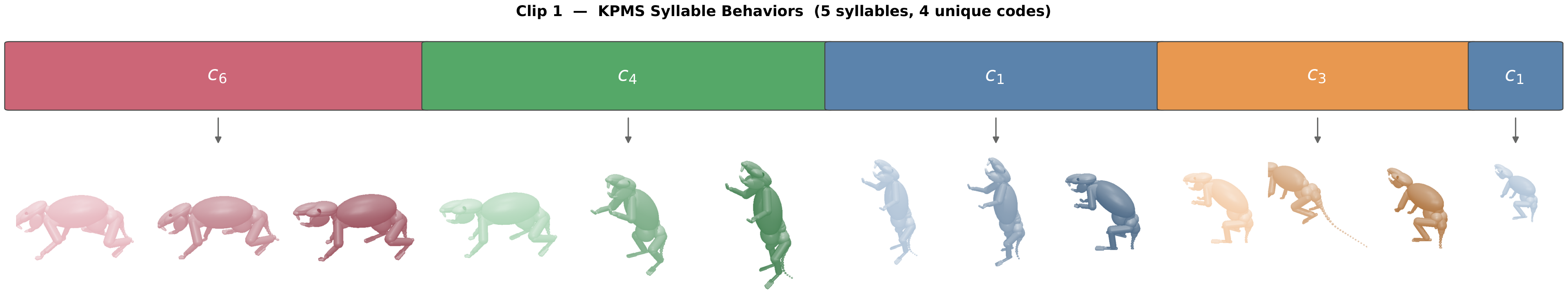

Keypoint-MoSeq and similar probabilistic SSMs segment continuous behavior into discrete syllables, fine-grained motifs that may not be interpretable alone, but whose sequences paint a coarser picture from which coherent, identifiable behavior emerges. Yet these models operate on keypoint dynamics: they describe what behavior looks like, not how it is physically produced, and cannot serve as generative models of biomechanically realistic trajectories. Reinforcement learning, on the other hand, excels at physics-based motor control, yet the latent representations learned by end-to-end imitation policies are typically unstructured, entangled, and not reusable for structured behavioral generation.

Code2Act bridges this gap by treating discrete syllable codes as the interface between behavioral segmentation and embodied motor control.

KPMS Code-to-Action Mapping. Discrete syllable codes from Keypoint-MoSeq are mapped through the Code2Act decoder to produce continuous motor commands that drive whole-body biomechanical control.

Pipeline Overview

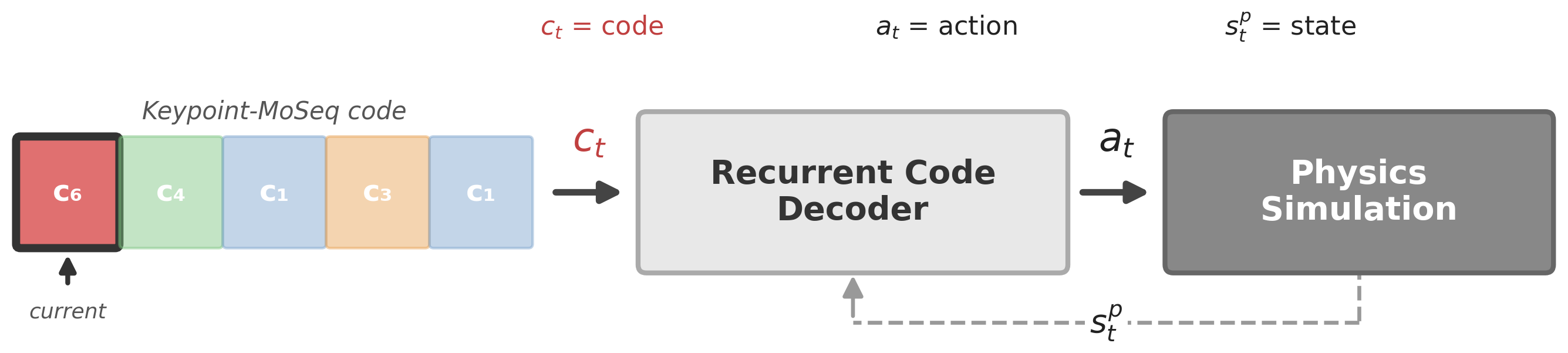

Code2Act Overview. Discrete syllable codes from Keypoint-MoSeq drive a recurrent code decoder that produces motor actions; proprioceptive state feeds back from the physics simulation to close the loop.

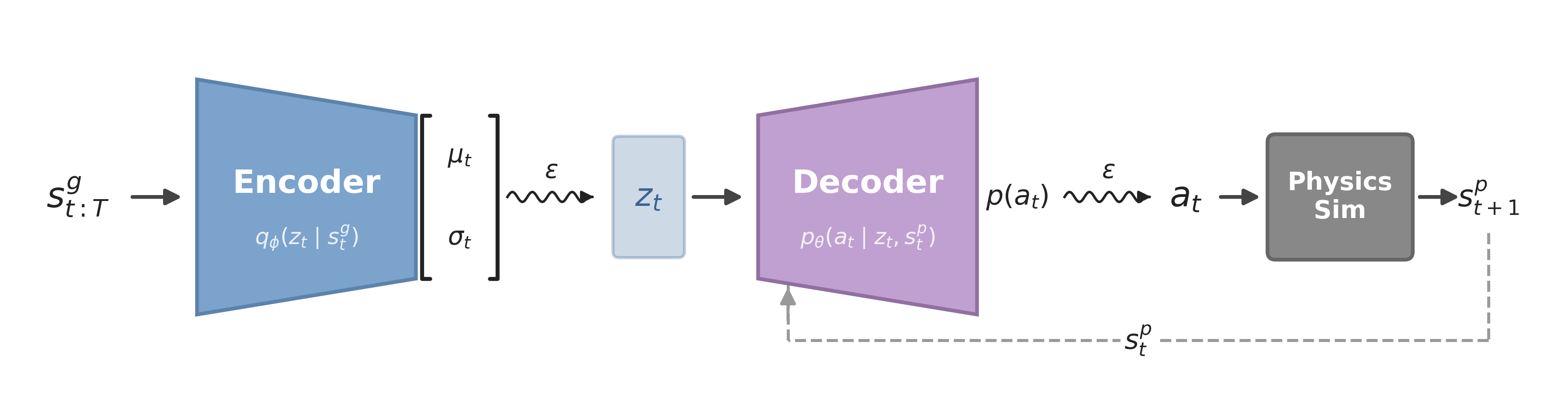

Code2Act follows a prior distillation architecture. First, MIMIC-MJX trains an encoder-decoder VAE policy to imitate naturalistic rodent trajectories from inverse kinematics, producing motor commands for a biomechanical rodent body in MuJoCo MJX.

MIMIC-MJX Inverse Dynamics Model Training. The base imitation learning framework trains an encoder-decoder VAE policy to track reference trajectories from inverse kinematics, producing motor commands that drive a biomechanical rodent body in MuJoCo MJX.

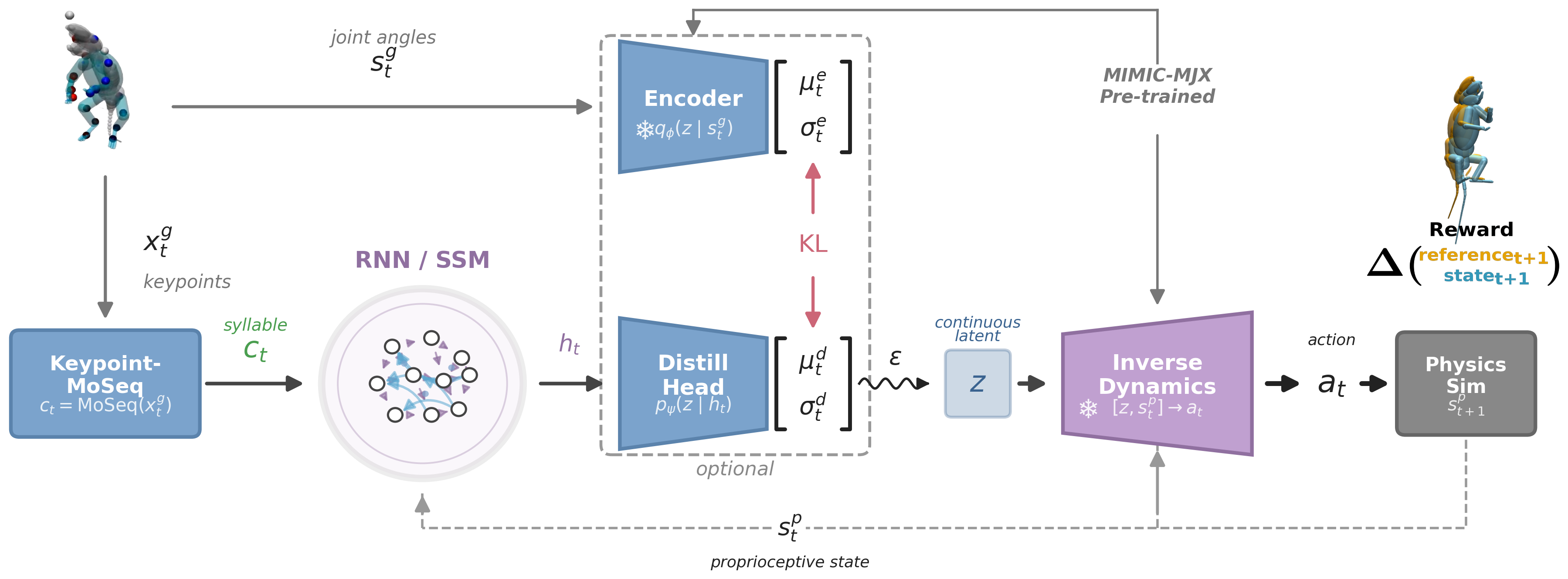

Code2Act then distills this pretrained encoder into a code-conditioned controller. Keypoint-MoSeq segments reference trajectories into discrete syllable codes. A GRU-based RNN decoder takes syllable code embeddings and proprioceptive feedback as input, producing hidden states that a unified VAE head maps to the pretrained encoder’s latent space. The pretrained decoder translates these latent samples into motor commands. During training, a frozen encoder provides KL alignment targets, ensuring the RNN learns to reproduce the encoder’s latent dynamics from syllable codes alone.

Code2Act Pipeline. Keypoint-MoSeq segments reference trajectories into discrete syllable codes. A GRU-based RNN decoder takes (code embedding, proprioception) as input and produces hidden states. A unified VAE head maps the hidden state to a latent distribution (μ, σ), from which z is sampled and fed through the pre-trained VAE decoder to produce motor commands. During training, a frozen pre-trained encoder provides KL alignment targets.
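The KL alignment step described above can be made concrete with a minimal sketch. It assumes that both the VAE head and the frozen encoder output diagonal Gaussians; the KL direction (head relative to encoder), the latent size, and the helper names `diag_gaussian_kl` and `reparameterize` are illustrative assumptions, not details taken from the Code2Act implementation.

```python
import math
import random

def diag_gaussian_kl(mu_q, sigma_q, mu_p, sigma_p):
    """KL(q || p) between two diagonal Gaussians, summed over latent dimensions."""
    kl = 0.0
    for mq, sq, mp, sp in zip(mu_q, sigma_q, mu_p, sigma_p):
        kl += math.log(sp / sq) + (sq ** 2 + (mq - mp) ** 2) / (2.0 * sp ** 2) - 0.5
    return kl

def reparameterize(mu, sigma, rng):
    """Draw z = mu + sigma * eps with eps ~ N(0, 1), one value per dimension."""
    return [m + s * rng.gauss(0.0, 1.0) for m, s in zip(mu, sigma)]

# Hypothetical 3-dimensional latent (the real latent size is not given here).
# q stands in for the RNN head's output, p for the frozen encoder's target.
mu_q, sigma_q = [0.1, -0.2, 0.0], [1.0, 0.5, 2.0]
mu_p, sigma_p = [0.0, 0.0, 0.0], [1.0, 1.0, 1.0]

kl_same = diag_gaussian_kl(mu_p, sigma_p, mu_p, sigma_p)  # identical distributions
kl_diff = diag_gaussian_kl(mu_q, sigma_q, mu_p, sigma_p)  # mismatched distributions
z = reparameterize(mu_q, sigma_q, random.Random(0))       # latent fed to the decoder
```

The KL term vanishes only when the head reproduces the encoder's mean and scale in every dimension, which is what lets a code-conditioned RNN stand in for the encoder at rollout time.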

Results

Imitation from Coarse Syllable Codes

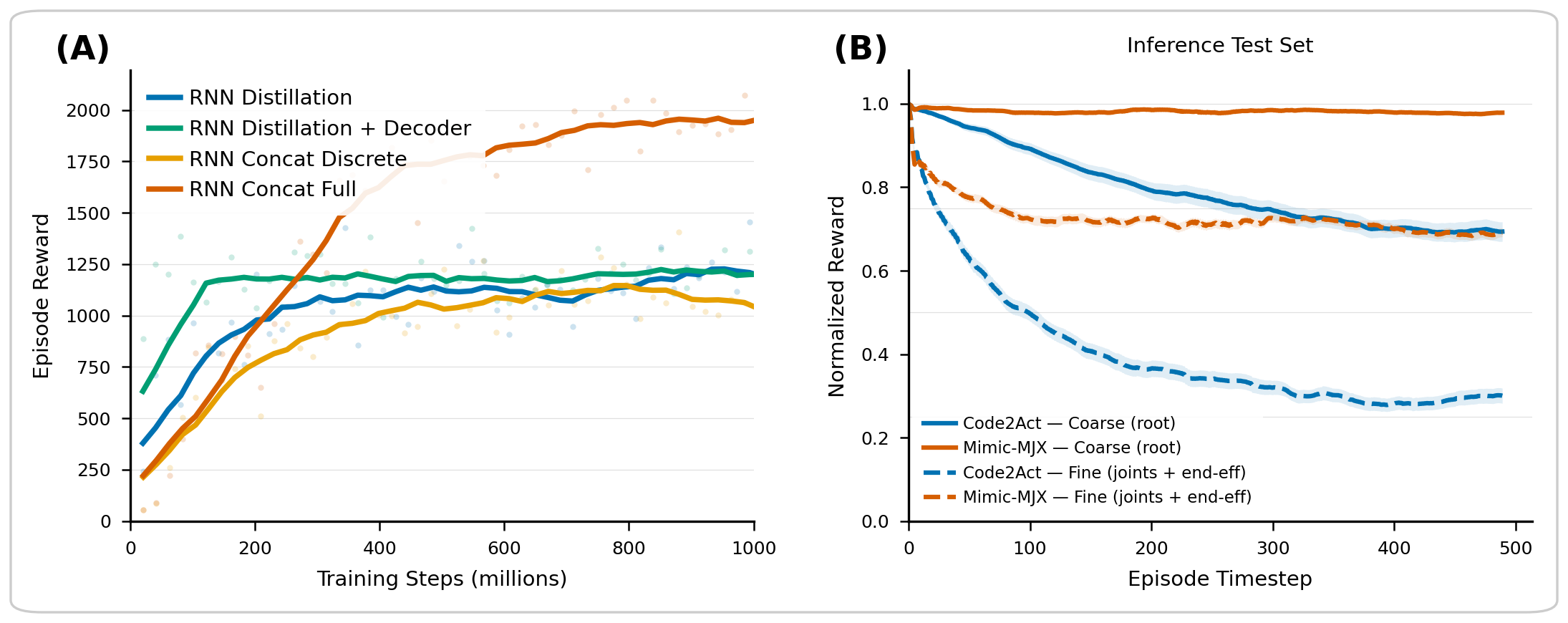

Code2Act achieves strong imitation from coarse syllable information alone. Given a KPMS code sequence, the actual trajectory could unfold in many different ways, so tracking reward serves as a proxy for learning the internal dynamics of each syllable rather than as an end goal. Two key findings emerge: (A) step-wise distillation best captures internal dynamics, and (B) learning these dynamics does not require achieving high tracking reward.

(A) Training curves comparing RNN Distillation, RNN Distillation + Decoder, RNN Concat Discrete, and RNN Concat Full architectures. (B) Reward decomposition on the 250-frame generalization set: Code2Act (blue) vs. MIMIC-MJX oracle (orange). Solid = coarse tracking (root), dashed = fine tracking (joints + end-effectors).

Behavioral Repertoire

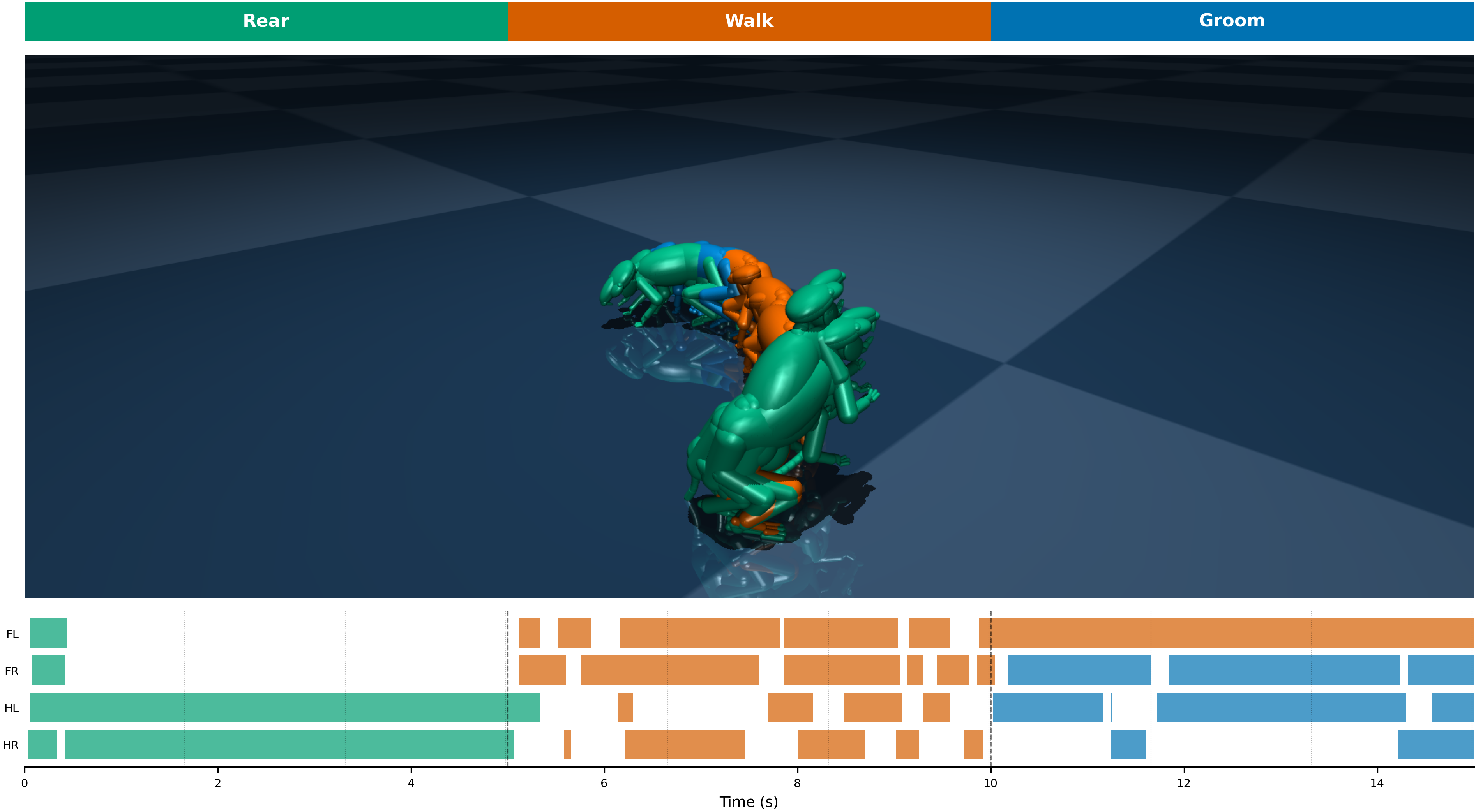

The behavior parade shows the full repertoire that Code2Act produces. All bodies receive the same code sequence (walk → immobility → rear) but start from different poses, demonstrating consistent, biomechanically realistic dynamics regardless of initial conditions.

Behavior Parade (Side View). All bodies receive the same KPMS code sequence but start from different instantiated positions, producing consistent dynamics regardless of initial pose.

Behavior Parade (Motion Trail). Frozen copies of a single body performing a rear → walk → immobility transition, with a behavior-colored trajectory trace. Each copy is sampled at evenly-spaced timesteps within its behavior phase, showing how the decoder transitions between distinct motor programs.

From Syllables to Behavior

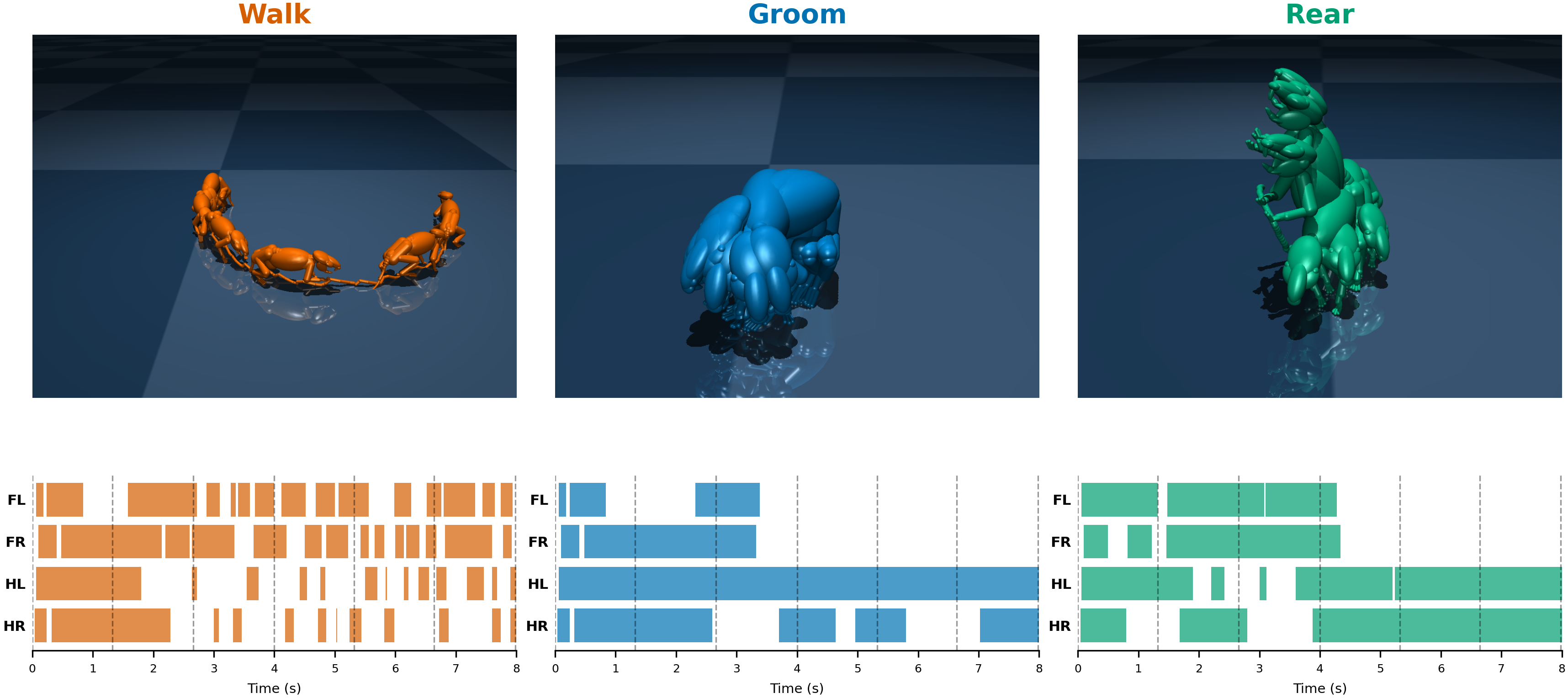

Keypoint-MoSeq (KPMS) discovers a vocabulary of behavioral syllables directly from data — not coarse human labels like “walking” or “grooming,” but fine-grained, sub-second motifs: a single stride fragment, a head turn, a subtle postural shift. On their own, these syllables are abstract — short segments in a statistical decomposition. Code2Act makes them tangible. By feeding a single syllable code into the embodied controller and holding it constant, we can watch what that syllable actually does to a physically simulated body. A code that appeared as a brief cluster boundary in KPMS becomes a sustained gait cycle, a stable postural hold, or a rhythmic head movement playing out in real physics (see Within-Syllable Hidden Dynamics for the corresponding internal state trajectories).

The ghost-body video below shows this: multiple trajectories overlaid, all driven by the same behavioral code but from different initial conditions. Each code reliably produces its characteristic motor pattern regardless of starting pose.

Behavior Instantiation. K=6 trajectories per behavior (Immobility | Walk | Rear), all from the same starting pose. Each colored body is an independent rollout driven by the same KPMS code sequence.

Motion Trails. Frozen-copy trails for each behavior (Immobility | Walk | Rear). Walk codes produce extended forward trajectories, immobility codes remain stationary, and rear codes show vertical displacement with minimal horizontal drift.

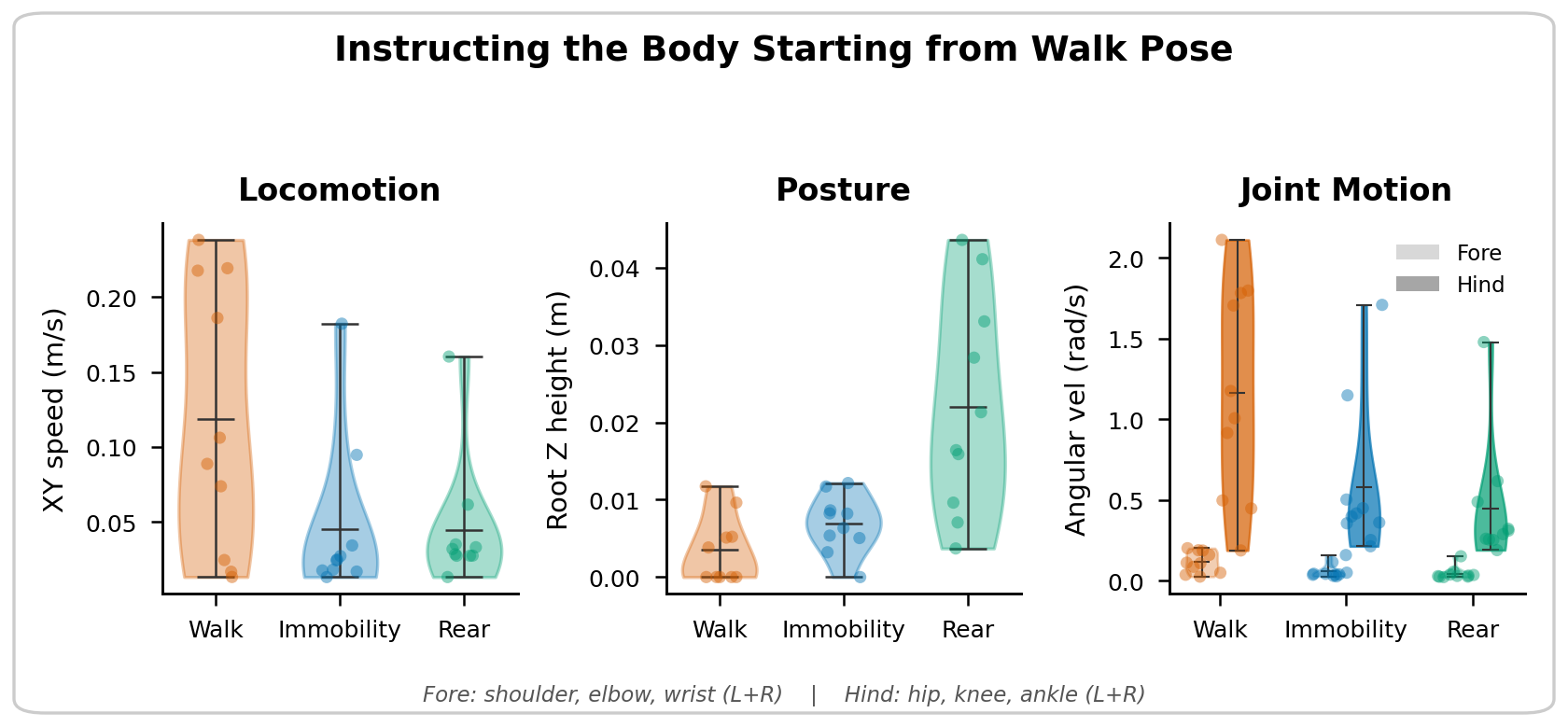

But individual syllables only tell part of the story. Real behavior is a conversation — a fluid sequence of syllables, not a single repeated word. When we feed Code2Act a natural sequence of KPMS codes extracted from real data, the transitions between syllables produce coherent, naturalistic movement: a walk that slows into a pause, a postural shift that flows into grooming. The kinematic signatures below confirm that code sequences are instructional at both coarse and fine scales, with each syllable category driving distinct motor dynamics across limbs.

Kinematic Signatures. Violin plots of XY speed, root Z height, and forelimb/hindlimb angular velocity per behavior. Walk codes produce the highest locomotion and joint activity; rear codes drive vertical posture with strong hindlimb engagement; immobility codes remain stationary. Individual dots = per-clip values.
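As a rough illustration of how per-clip values like those in the violin plots could be derived, the sketch below computes mean XY speed, mean root height, and mean absolute joint angular velocity by finite differences. The frame rate, units, and function names are assumptions for illustration; the exact feature definitions used for the figure are not specified here.

```python
import math

def xy_speed(root_xyz, fps):
    """Per-frame horizontal speed from consecutive root positions (units per second)."""
    return [
        math.hypot(b[0] - a[0], b[1] - a[1]) * fps
        for a, b in zip(root_xyz, root_xyz[1:])
    ]

def angular_velocity(joint_angles, fps):
    """Per-frame absolute angular velocity of one joint (rad/s) by finite differences."""
    return [abs(b - a) * fps for a, b in zip(joint_angles, joint_angles[1:])]

def mean(values):
    return sum(values) / len(values)

# Toy clip: the root moves 0.01 units per frame along x at constant height.
fps = 50  # assumed frame rate, not stated in the source
root = [(0.01 * t, 0.0, 0.05) for t in range(5)]
hip = [0.0, 0.1, 0.2, 0.1, 0.0]

clip_speed = mean(xy_speed(root, fps))            # one dot in an XY-speed violin
clip_height = mean([p[2] for p in root])          # one dot in a root-Z violin
clip_hip_vel = mean(angular_velocity(hip, fps))   # one dot in a limb-velocity violin
```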

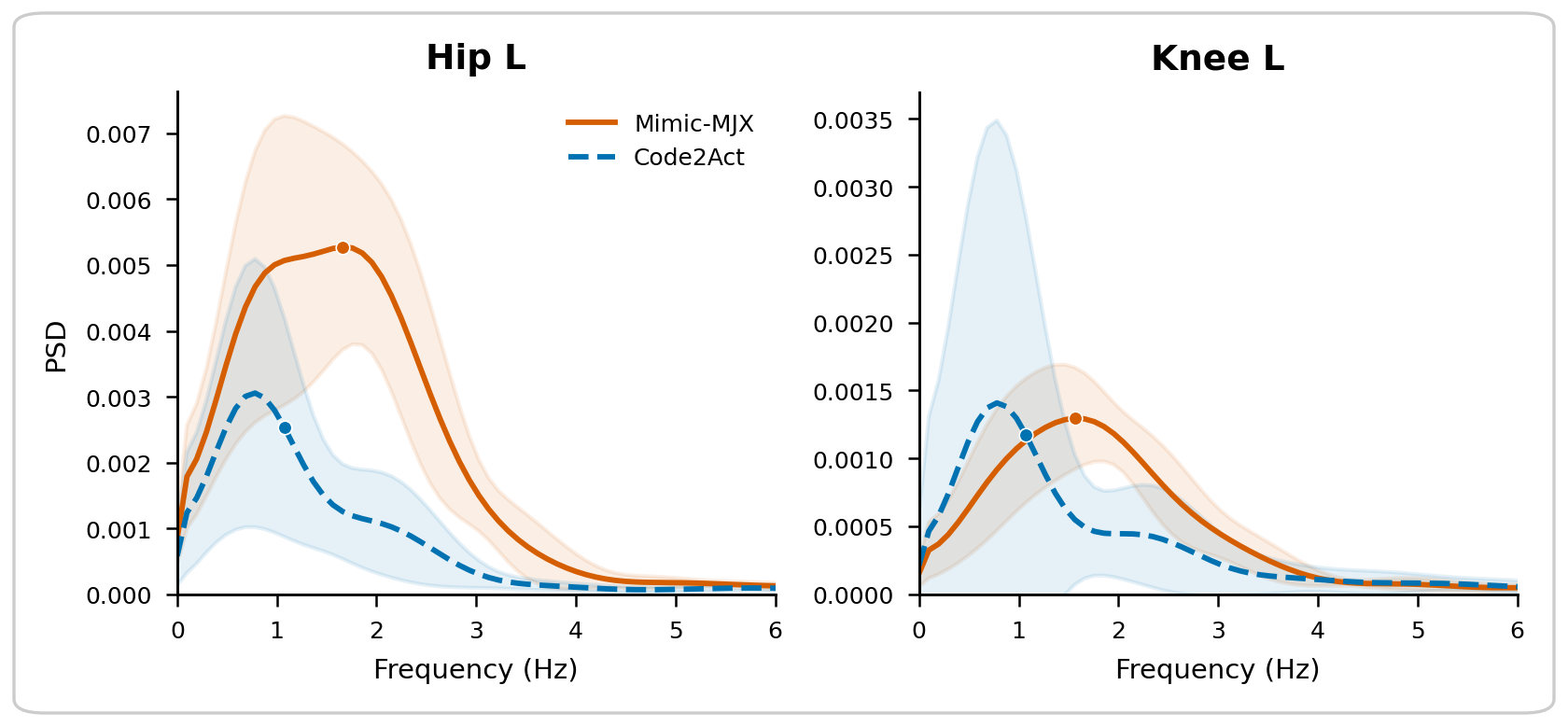

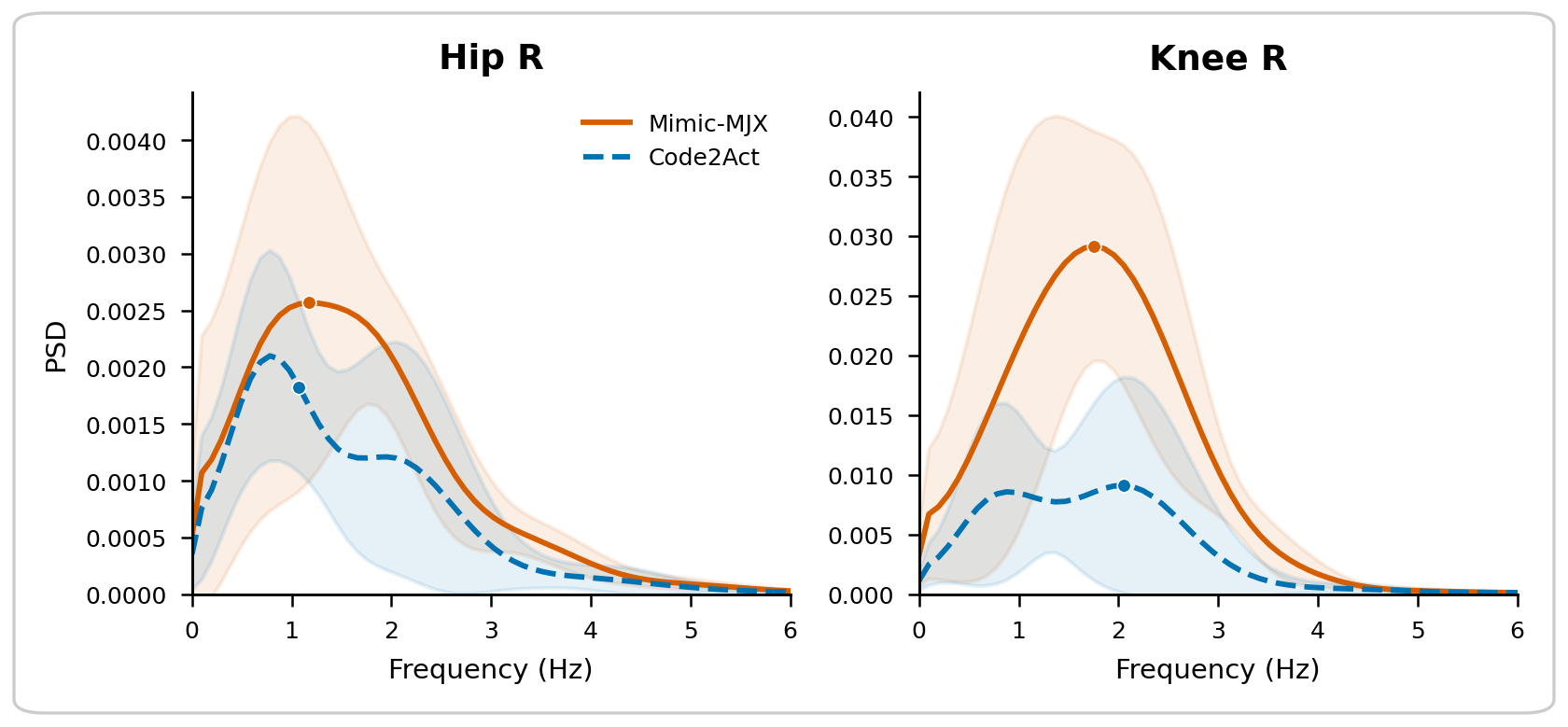

Code2Act also preserves the fine temporal structure of locomotion. The power spectral density of hind limb joint angles shows that Code2Act captures the dominant ~2 Hz gait frequency bilaterally, with proximal joints (hip) showing tight spectral overlap with MIMIC-MJX and distal joints (knee) showing expected divergence.

Left hind limb. PSD comparing Code2Act (blue, dashed) and MIMIC-MJX (orange) during walking. Hip peaks align closely; knee shows broader divergence.

Right hind limb. Same pattern: dominant gait frequency preserved at hip, with expected fidelity loss at knee. Bilateral symmetry is maintained.
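To show how a dominant gait frequency can be read off a joint-angle trace, here is a minimal periodogram sketch using a direct DFT. The 50 Hz frame rate, the synthetic 2 Hz hip signal, and the function names are assumptions; the PSD estimator actually used for the figures (for example, Welch averaging) is not stated in the text.

```python
import cmath
import math

def periodogram(signal, fs):
    """Periodogram via a direct DFT; returns (frequencies, power up to a constant)."""
    n = len(signal)
    mean = sum(signal) / n
    x = [v - mean for v in signal]  # remove the DC offset so it does not dominate
    freqs, power = [], []
    for k in range(n // 2 + 1):
        coeff = sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        freqs.append(k * fs / n)
        power.append(abs(coeff) ** 2 / n)
    return freqs, power

def dominant_frequency(signal, fs):
    """Frequency of the bin with the largest power."""
    freqs, power = periodogram(signal, fs)
    return freqs[max(range(len(power)), key=power.__getitem__)]

fs = 50.0  # assumed frame rate in Hz
n = 200    # 4 seconds of data, giving 0.25 Hz frequency resolution
# Synthetic hip angle oscillating at 2 Hz around a 0.3 rad offset.
hip_angle = [0.3 + 0.2 * math.sin(2 * math.pi * 2.0 * t / fs) for t in range(n)]
peak_hz = dominant_frequency(hip_angle, fs)
```

Comparing the peak bin (and the shape around it) between two controllers is one simple way to quantify the kind of spectral overlap described for the hip and knee.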

Within-Syllable Hidden Dynamics

Although individual syllable codes do not map one-to-one to pure behaviors — they are learned from continuous reference data — the RNN decoder learns distinct dynamical regimes per syllable. PCA of the GRU hidden state reveals that walking codes drive extended, looping trajectories through hidden state space reflecting rhythmic gait cycles; immobility codes collapse to a tight, low-variance region; and rearing codes trace compact arcs corresponding to transient lift-and-hold dynamics. These trajectories occupy separable regions of hidden state space, confirming that the decoder internalizes qualitatively different dynamics per syllable rather than routing all behaviors through a shared dynamical regime.

Within-syllable hidden state dynamics. Left: 3D PCA of GRU hidden states progressing in sync with the rollout, rotating to reveal the structure. Right: 10 bodies per code, all starting from random poses. Each KPMS code is held constant for 250 frames. Walking codes (orange) drive extended, looping trajectories through hidden state space; immobility codes (blue) converge to a compact cluster; rearing codes (green) trace distinct arcs. Despite starting from diverse initial conditions, trajectories under the same code converge to the same region, showing that each code activates a reproducible motor program in the recurrent hidden state.

Planning in Syllable Space

By internalizing the dynamics of each syllable from physics-based training, Code2Act reframes behavior generation as planning in syllable space rather than rollout in a simulator. Because these dynamics are grounded in an embodied controller, each syllable captures how behavior is produced, not just what it looks like at the keypoint level.

We fit generative models (empirical transition matrix, HMM, ARHMM) to KPMS code sequences and use them to generate novel sequences. These generate coherent, free-running whole-body behavior without any reference trajectory.
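The simplest of these generators, the empirical transition matrix, can be sketched in a few lines. This is a minimal illustration assuming first-order Markov transitions over per-frame integer codes; the toy sequences, the uniform fallback for unseen codes, and the function names are hypothetical, and the HMM and ARHMM variants are not shown.

```python
import random
from collections import Counter, defaultdict

def fit_transition_matrix(sequences, num_codes):
    """Row-normalized first-order transition counts; unseen rows fall back to uniform."""
    counts = defaultdict(Counter)
    for seq in sequences:
        for prev, nxt in zip(seq, seq[1:]):
            counts[prev][nxt] += 1
    matrix = []
    for i in range(num_codes):
        total = sum(counts[i].values())
        if total == 0:
            matrix.append([1.0 / num_codes] * num_codes)
        else:
            matrix.append([counts[i][j] / total for j in range(num_codes)])
    return matrix

def sample_codes(matrix, start, length, rng):
    """Sample a first-order Markov chain of syllable codes."""
    codes = [start]
    states = list(range(len(matrix)))
    while len(codes) < length:
        codes.append(rng.choices(states, weights=matrix[codes[-1]])[0])
    return codes

# Toy per-frame code sequences standing in for KPMS output.
observed = [[0, 0, 0, 1, 1, 2, 2, 2, 0, 0], [1, 1, 1, 2, 2, 0, 0, 1, 1, 1]]
P = fit_transition_matrix(observed, num_codes=3)
novel = sample_codes(P, start=0, length=250, rng=random.Random(0))
```

A sampled sequence such as `novel` would then be fed to the decoder in place of codes extracted from a recording.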

Free-loop generation. Novel code sequences sampled from learned generative models (Transition Matrix | HMM | ARHMM) produce coherent whole-body behavior. No reference trajectory is provided; the decoder autonomously translates the generated code sequence into motor commands.

Baseline comparison. Code sequences generated from baseline models compared against Code2Act. The baseline, given random code sequences, produces no meaningful movement, confirming that coherent behavior requires structured code sequences rather than arbitrary syllable inputs.

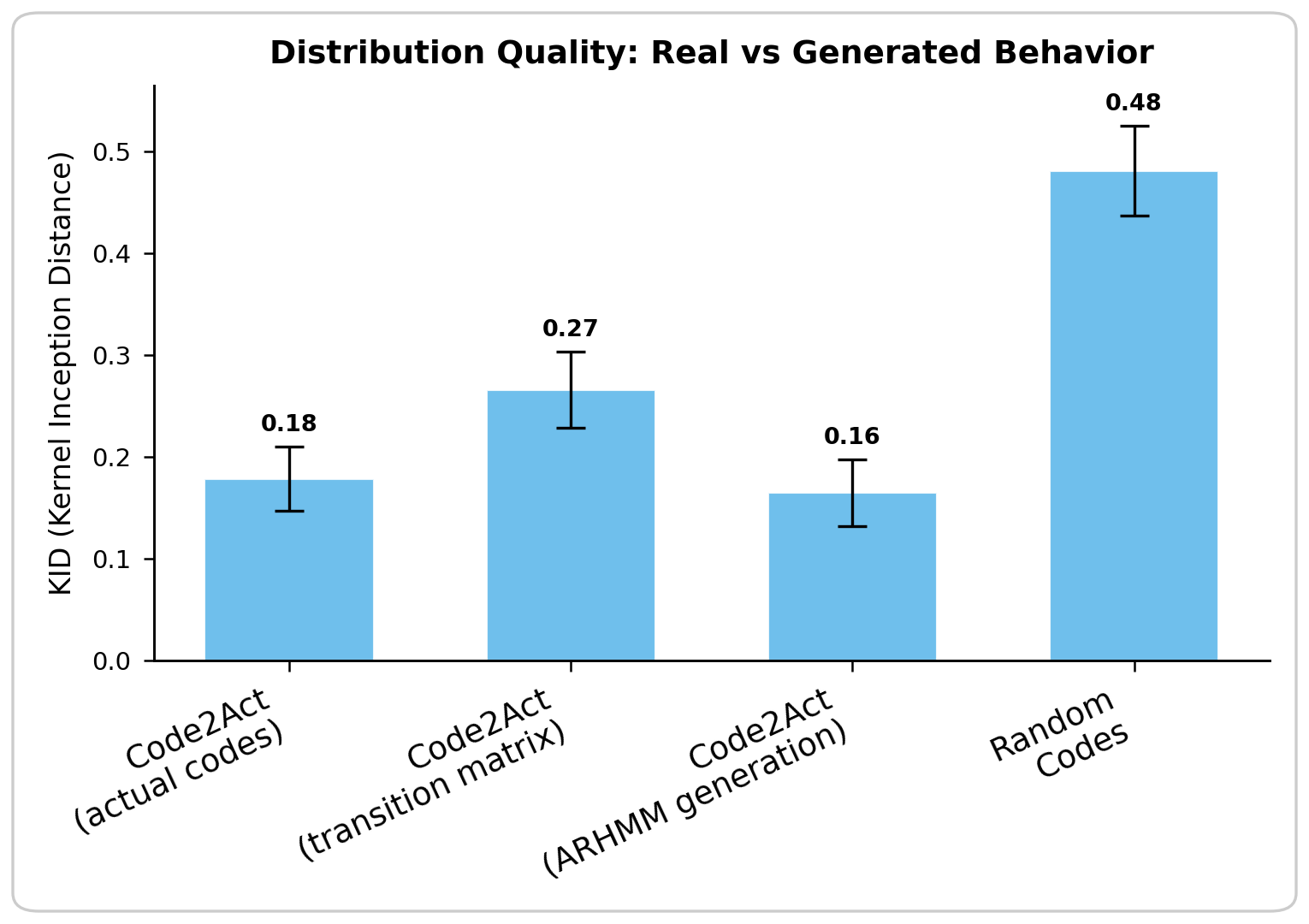

To quantify how realistic the generated behavior is, we compute Kernel Inception Distance (KID), which measures how closely generated behavior matches real behavior in the feature space of a VAE trained on MIMIC-MJX trajectories. All three generative approaches achieve low KID, confirming that planning in syllable space produces distributionally realistic whole-body behavior regardless of the code generation strategy.

Distribution Quality: Real vs Generated Behavior. KID (lower is better) comparing Code2Act with actual KPMS codes, transition matrix sampling, ARHMM generation, and random codes. All structured code generation methods produce behavior distributionally close to real, while random codes diverge sharply. Error bars = std across 3 VAE seeds; 200-frame clips.
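For readers unfamiliar with KID, the sketch below implements its core quantity: the unbiased squared maximum mean discrepancy with the standard cubic polynomial kernel. Random Gaussian vectors stand in for VAE features, and the usual averaging over feature subsets is omitted; the feature dimension, sample counts, and names are assumptions for illustration only.

```python
import random

def poly_kernel(x, y):
    """Cubic polynomial kernel used by KID: (x . y / d + 1) ** 3."""
    d = len(x)
    return (sum(a * b for a, b in zip(x, y)) / d + 1.0) ** 3

def kid(real, generated):
    """Unbiased squared-MMD estimate between two sets of feature vectors."""
    m, n = len(real), len(generated)
    k_rr = sum(poly_kernel(real[i], real[j])
               for i in range(m) for j in range(m) if i != j)
    k_gg = sum(poly_kernel(generated[i], generated[j])
               for i in range(n) for j in range(n) if i != j)
    k_rg = sum(poly_kernel(r, g) for r in real for g in generated)
    return k_rr / (m * (m - 1)) + k_gg / (n * (n - 1)) - 2.0 * k_rg / (m * n)

rng = random.Random(0)

def draw(mean, count, dim=8):
    """Stand-in feature vectors: independent Gaussians around a common mean."""
    return [[rng.gauss(mean, 1.0) for _ in range(dim)] for _ in range(count)]

real_feats = draw(0.0, 100)
close_feats = draw(0.0, 100)  # same distribution as the real features
far_feats = draw(1.5, 100)    # shifted distribution
kid_close = kid(real_feats, close_feats)
kid_far = kid(real_feats, far_feats)
```

Because the estimator is unbiased, matched distributions give values near zero (possibly slightly negative), while a shifted distribution gives a clearly larger value, mirroring the gap between structured and random codes in the figure.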

Generalization to Unseen Data

The results above were evaluated on the training distribution. A critical test is whether Code2Act generalizes to entirely new behavioral recordings that neither the decoder nor KPMS has seen during training. We sampled 20 long segments from a held-out continuous recording, extracted KPMS syllable codes from the new data, and ran both Code2Act and MIMIC-MJX on the same segments.

Generalization comparison. Real reference trajectories (left), MIMIC-MJX (center), and Code2Act (right) on unseen continuous data, with 10 ghost bodies per panel. Code2Act generates behavior from KPMS syllable codes alone, without access to the reference trajectory.

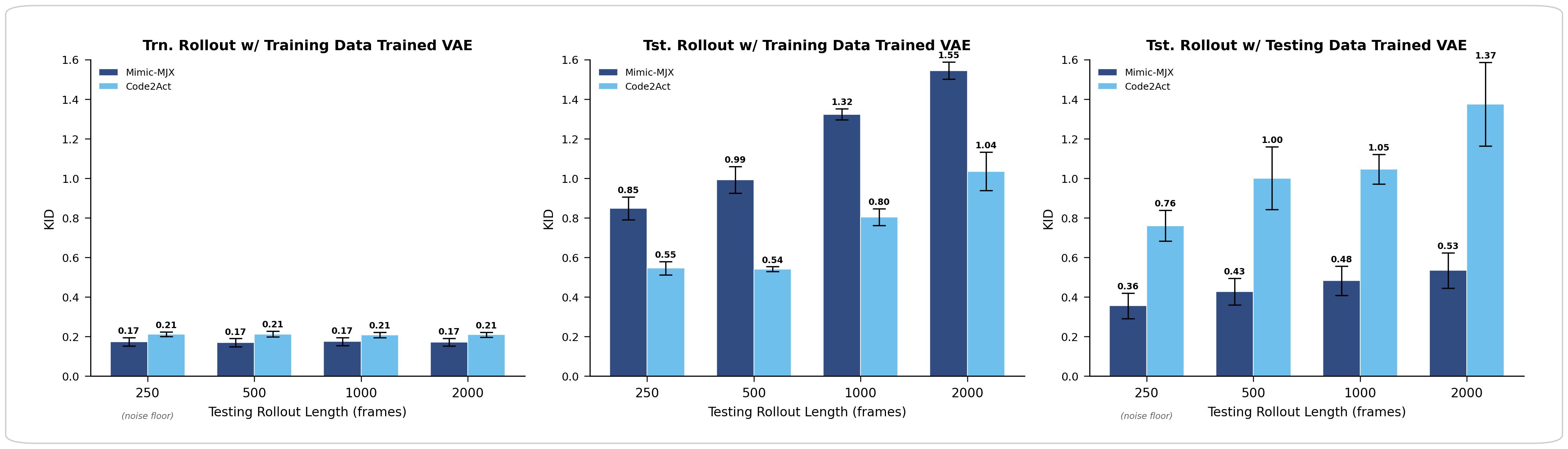

To quantify this, we trained two VAE feature extractors — one on training-data mimic rollouts and one on testing-data mimic rollouts — and computed KID at four rollout lengths (250, 500, 1000, and 2000 frames). The three panels below show complementary views of the same experiment.

Generalization KID across rollout lengths. Lower is better. Dark blue = MIMIC-MJX, light blue = Code2Act. Left: in-distribution baseline (training rollouts evaluated against training-data VAE); both methods perform near the noise floor. Middle: generalization rollouts against training-data VAE; Code2Act is consistently closer to the training distribution. Right: generalization rollouts against testing-data VAE; MIMIC-MJX is closer to its own generalization distribution (as expected), but Code2Act follows a similar scaling trend. Error bars = std across 3 VAE seeds.

On training data (left panel), both methods reproduce the reference distribution near the noise floor, which confirms that both policies are well trained. On generalization data (middle panel), Code2Act outperforms MIMIC-MJX at every rollout length, and the gap widens with duration. This is because MIMIC-MJX was trained on 250-frame clips: as rollouts extend beyond this horizon, it accumulates drift at clip boundaries. Code2Act’s discrete syllable interface provides a natural abstraction: each code maps to its learned motor program regardless of duration. The right panel provides a complementary view: when the VAE is trained on generalization-data mimic rollouts, MIMIC-MJX is naturally closer to its own distribution, but Code2Act tracks a similar scaling trend, confirming that the syllable bottleneck produces behaviorally coherent motion even on unseen data.

Conclusion

Code2Act demonstrates that discrete behavioral syllables, grounded in physics-based motor control, serve as a sufficient interface for generating naturalistic whole-body behavior. By distilling the dynamics of each syllable from embodied training, the system internalizes how behavior is produced, not just what it looks like. This turns behavior generation into a problem of planning in syllable space: sequencing discrete codes rather than rolling out a physics engine.

The result bridges two historically separate approaches (probabilistic state-space models that capture behavioral structure, and reinforcement learning that produces motor control) by using syllable codes as the interface between structure and action. Code2Act provides both an engineering solution for planning behavioral sequences without physics and a generative modeling framework for synthesizing whole-body behavior through syllable code sequences.

Citation

@article{code2act2026,
  title={Code to Action: Motor Programs from Discrete Syllables in Whole-Body Biomechanical Control},
  author={Bian, Kaiwen and Zhang, Charles Y. and Yang, Yuanjia and Sirbu, Aidan and Jha, Aditi and Buchanan, Kelly and Leonardis, Eric J. and Richards, Blake A. and {\"O}lveczky, Bence P. and Linderman, Scott W. and Pereira, Talmo D.},
  journal={arXiv preprint},
  year={2026}
}